Instagram to Notify Parents When Teens Search for Suicide Content

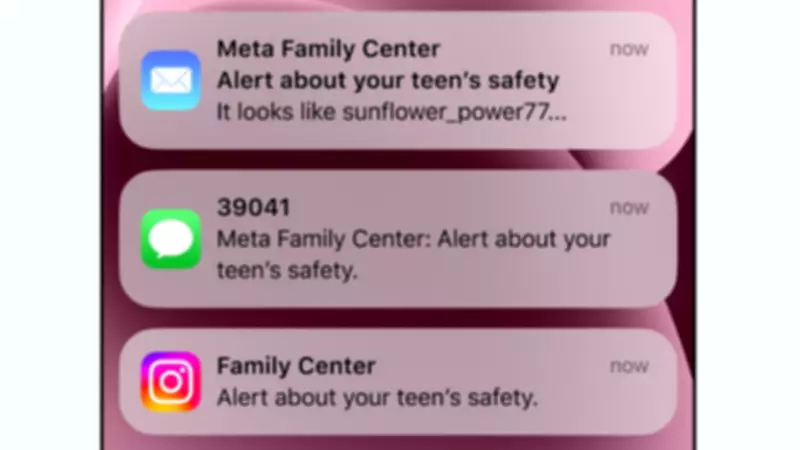

Instagram is set to launch a feature that will alert parents if their teenage children repeatedly search for content related to suicide or self-harm. Depending on the parent's settings, the notifications will arrive by email, text message, WhatsApp, or directly within the Instagram app. This update is part of Meta's parental supervision tools, which are already available in the UK, US, Australia, and Canada.

How the Alert System Works

Parents who have enabled parental supervision on their child's Instagram account will receive an alert if the teen searches for specific phrases multiple times in a short period. The qualifying phrases are those that promote suicide or self-harm, suggest a desire to self-injure, or simply contain words such as "suicide" or "self-harm." Alongside the alert, Meta will give parents access to expert resources designed to help them navigate sensitive conversations with their teens.

Soon, the system will also extend to interactions with Meta AI: alerts will be triggered if a young user discusses suicide or self-harm with the AI assistant. Instagram already blocks many search terms related to these topics, and Meta AI includes guardrails that prevent harmful discussions and instead direct users to support organizations.

Criticism from Online Safety Experts

Despite these measures, the update has faced criticism from online safety advocates. The Molly Rose Foundation (MRF), a leading charity, has labeled the announcement as "flimsy" and expressed concerns that it could leave parents "panicked and ill-prepared" for difficult discussions. Andy Burrows, chief executive of the MRF, stated, "Every parent would want to know if their child is struggling, but these notifications risk doing more harm than good."

The charity's research indicates that Instagram's algorithm still actively recommends harmful content related to depression, suicide, and self-harm to vulnerable young people. Burrows argued that the focus should be on addressing these algorithmic risks rather than shifting responsibility to parents with what he called a "cynically timed announcement."

Meta's Stance and Ongoing Legal Challenges

Meta defends its policies, stating that it removes content that promotes suicide or self-harm and, for teens, goes further by hiding discussions of these topics altogether. The company also blocks numerous search terms and directs users to local support organizations. In 2024, Instagram introduced "teen accounts" for users under 16, which require parental permission to change settings and include enhanced monitoring options that are agreed with the child.

However, Meta is currently embroiled in a significant lawsuit in the US, where it is accused of creating addictive apps that harm young people's mental health. Meta denies these claims, with CEO Mark Zuckerberg asserting in court that the company's goal has always been to build useful services that foster connection.

Support Resources for Those in Need

For individuals feeling emotionally distressed or suicidal, support is available. In the UK, Samaritans can be contacted at 116 123 or via email at jo@samaritans.org. In the US, individuals can call their local Samaritans branch or the national helpline at 1 (800) 273-TALK.