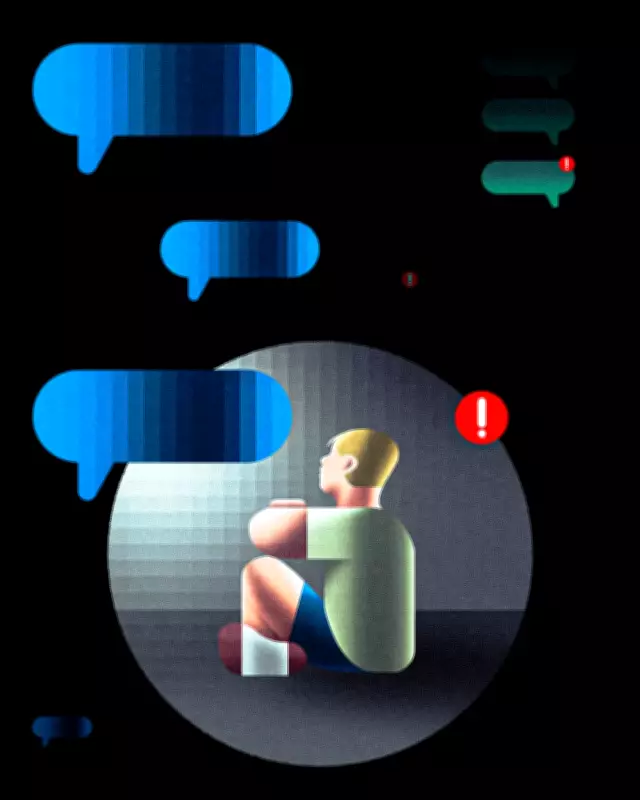

AI School Counselors Track Student Mental Health: Safety Concerns Rise

As hundreds of schools across the United States implement automated monitoring tools to track students' mental health, educators report that many adolescents find confiding in chatbots "more natural" than speaking with human counselors. This technological shift comes amid widespread budget shortfalls and limited mental health staffing in educational institutions.

The Alert That Saved a Life

Brittani Phillips, a middle school counselor in Putnam County, Florida, vividly recalls the alert that arrived around 7 p.m. one evening last spring. The artificial intelligence-enabled therapy platform her school uses had raised a "severe" alert for an eighth-grader, indicating a potential risk of self-harm based on his chat interactions.

"He's alive and well. He's in ninth grade this year," Phillips confirms, describing how she spent that evening coordinating with the student's mother and local police. The incident, she believes, built crucial trust between her and the family, with the student now regularly greeting her in school hallways.

Growing Industry of AI Mental Health Tools

Phillips's district has used Alongside, an automated student monitoring system, for three years. The platform belongs to a growing category of tools marketed to K-12 schools, with at least nine companies securing funding deals since 2022. Alongside reports that its services reach more than 200 schools nationwide.

The company argues that its platform surpasses typical telehealth options through its social and emotional skill-building chat feature, in which students interact with a llama character named Kiwi that teaches resilience-building techniques. All AI-generated content is monitored by clinical professionals.

The Digital Couch: Why Students Prefer AI

Students' nervousness helps explain their comfort with AI confidants, according to school counselors and mental health professionals.

"Speaking with a mental health professional can be intimidating, especially for adolescents," explains Sarah Caliboso-Soto, a licensed clinical social worker and assistant director of clinical programs at the University of Southern California Suzanne Dworak-Peck School of Social Work.

Generational factors play a crucial role. Students raised with chat interfaces through social media and websites find AI interactions familiar. "Kids today find that it's easier to text than call someone on the phone," notes Linda Charmaraman, director of the Youth, Media & Wellbeing Research Lab at Wellesley Centers for Women.

Chatting with an AI spares students from watching facial expressions they might perceive as judgmental. Chatbots are also always available, with no appointments to schedule. "It's almost more natural than interacting with another human being," Caliboso-Soto observes.

The Human Element: What AI Cannot Replace

Despite these advantages, Caliboso-Soto expresses significant concerns about using AI as a substitute for counselors. "You can't replace human connection, human judgment," she emphasizes. While large language models can detect symptoms in text, they cannot perceive the vocal inflections, body movements, or subtle behavioral cues that human clinicians observe.

Charmaraman warns against overreliance on AI for mental health support. The technology might miss the nuances of human interaction and offer unrealistic positive reinforcement. She advocates holistic approaches that involve families and caregivers alongside technological tools.

Privacy and Practical Concerns

Privacy experts point out that these chatbots generally lack the confidentiality protections that apply to conversations with a licensed therapist. When student privacy concerns intersect with potential police involvement, the tools create "messy" privacy dilemmas, even under clinical supervision.

Both companies and counselors stress that human oversight remains essential. Phillips notes her district pays approximately $10 per student annually for Alongside's basic services, with volume discounts for larger districts.

This school year, Phillips had documented 19 "severe" alerts from the AI tool as of February, originating from 393 active users. The company doesn't break down incidents by student, so some individuals may account for multiple alerts.

Testing Boundaries and Building Trust

Phillips has discovered that human perception remains crucial for interpreting teenage humor and boundary-testing. Middle school students, particularly boys, occasionally input alarming statements like "my uncle touches me" or "my mom beat me with a pole" to test whether anyone monitors the system.

"They're just trying to see if anyone is listening, to test whether anyone cares," Phillips explains. When she addresses these incidents in person, she watches for changes in body language that indicate whether a concern is genuine. Students who were joking typically become apologetic; when a student shows no remorse, she notifies the parents.

Phillips appreciates having more intervention options than she did with previous monitoring systems, which automatically referred students to in-school suspension. As students recognize that she is actively monitoring, trust develops, and boundary-testing incidents decrease each year.

The Social Accountability Question

"Can you think of another time in history when people have been so lonely, when our communities have been so weak?" asks Sam Hiner, executive director of Young People's Alliance, a North Carolina organization advocating for youth political participation.

Hiner identifies a "parasocial relationship" risk when students develop one-sided emotional attachments to therapeutic AI. While acknowledging that the tools have some therapeutic value, he argues that AI should never express emotional states, such as by saying "I'm proud of you," in ways that encourage attachment.

Technology platforms often claim to decrease loneliness, Hiner contends, but they primarily measure whether bots serve as "effective crutches for immediate feelings of loneliness" rather than whether they foster genuine human connection and social accountability.

As schools navigate the complex intersection of technology and mental health support, the debate continues between technological efficiency and the irreplaceable value of human connection in student wellbeing.