The AI Paradox: Billions Invested, Half the Hardware Idle

In the relentless race to dominate artificial intelligence, the world's largest technology firms have adopted a single strategy: build more. More chips, more data centers, more power. Global capital expenditure has surged into the hundreds of billions of dollars as companies scramble to scale their AI systems to unprecedented levels.

Analysts project that data center investment will continue its sharp upward trajectory, with major cloud providers alone directing tens of billions annually toward AI-specific infrastructure. Massive projects like hyperscale campuses and Elon Musk's proposed 'Terafab' chip complex in Texas underscore the insatiable demand for computational power that continues to outpace supply.

The $30,000 Paperweight Problem

Yet beneath this explosive growth lies a startling inefficiency. Jürgen Hatheier, Ciena's vice president of business development, reveals a critical bottleneck: "Even today, where those data centers are highly optimized, you still have 50 per cent of the time that those GPUs spend on doing something just waiting for data to arrive."

Consider the economics: a single high-end GPU costs between $20,000 and $30,000 and draws roughly a kilowatt of power. When these chips spend half their operational time idle, the financial and energy waste becomes staggering. Across AI clusters comprising tens of thousands of such processors, this idle time translates into a substantial drag on returns and a larger environmental footprint.
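To put rough numbers on that waste, here is a back-of-the-envelope sketch in Python. Only the price range, the one-kilowatt draw and the 50 per cent idle share come from the figures above. The cluster size of 10,000 GPUs, the $25,000 midpoint price, the electricity rate of $0.08 per kWh and the simplification that a waiting GPU draws full power are all illustrative assumptions, and the function name `idle_waste` is hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def idle_waste(num_gpus, gpu_price_usd, gpu_power_kw, idle_fraction, usd_per_kwh):
    """Estimate the hardware value and energy tied up in idle GPU time over one year.

    Simplifying assumption: a GPU waiting for data draws the same power as a busy one.
    """
    if not 0.0 <= idle_fraction <= 1.0:
        raise ValueError("idle_fraction must be between 0 and 1")

    # Share of the purchase price that buys no useful work.
    idle_capital_usd = num_gpus * gpu_price_usd * idle_fraction
    # Energy consumed while the chips wait for data.
    idle_energy_kwh = num_gpus * gpu_power_kw * HOURS_PER_YEAR * idle_fraction
    idle_energy_cost_usd = idle_energy_kwh * usd_per_kwh
    return idle_capital_usd, idle_energy_kwh, idle_energy_cost_usd


if __name__ == "__main__":
    # Illustrative inputs: 10,000 GPUs, $25,000 each, 1 kW each, idle half the time.
    capital, energy_kwh, energy_cost = idle_waste(10_000, 25_000, 1.0, 0.5, 0.08)
    print(f"Hardware value sitting idle: ${capital:,.0f}")
    print(f"Energy used while idle:      {energy_kwh:,.0f} kWh per year")
    print(f"Cost of that energy:         ${energy_cost:,.0f} per year")
```

Under these assumptions, about $125 million of hardware value and roughly 43.8 GWh of electricity a year go to waiting rather than computing. Real idle power draw is typically below peak, so the energy figure should be read as an upper bound.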

The Speed Mismatch: Thinking Fast, Sharing Slow

"The innovation on the compute side has happened... at a factor of three faster than what we have innovated on the connectivity side," Hatheier explains. This creates a fundamental mismatch: AI systems have become exceptionally quick at processing information but struggle to share data between systems at comparable speeds.

This bottleneck becomes increasingly problematic as workloads scale. Training large language models requires moving vast datasets between machine clusters, often across multiple data centers. In enterprise applications, datasets can reach tens of petabytes, meaning even high-capacity networks might require days or weeks to transfer information without proper optimization.
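The "days or weeks" estimate can be checked with simple arithmetic. The sketch below assumes a 10-petabyte dataset (the low end of "tens of petabytes"), decimal petabytes, and a handful of illustrative link speeds. None of the link speeds come from the article, and the `efficiency` parameter is a hypothetical stand-in for protocol overhead and contention.

```python
SECONDS_PER_DAY = 86_400
BITS_PER_PETABYTE = 8 * 10**15  # decimal petabyte: 10^15 bytes


def transfer_days(dataset_petabytes, link_gbps, efficiency=1.0):
    """Days needed to move a dataset over a link running at a given share of line rate."""
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")

    total_bits = dataset_petabytes * BITS_PER_PETABYTE
    effective_bits_per_second = link_gbps * 1e9 * efficiency
    return total_bits / effective_bits_per_second / SECONDS_PER_DAY


if __name__ == "__main__":
    # Illustrative link speeds in gigabits per second.
    for gbps in (10, 100, 400):
        days = transfer_days(10, gbps)
        print(f"10 PB over {gbps:>3} Gb/s: {days:6.1f} days")
```

Even at full line rate, 10 PB takes a little over nine days on a 100 Gb/s link and about three months on a 10 Gb/s link, which is consistent with the range described above.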

Inference—the day-to-day operation of AI tools—adds another layer of unpredictable, continuous demand. When networks cannot maintain pace, machines simply pause. "If you have... a machine that can't communicate with another machine fast enough, you are wasting energy... you have GPUs that are sitting there, you have power that is being burned for nothing," Hatheier emphasizes.

The Rise of Agentic AI Compounds the Problem

The emergence of agentic AI systems, where multiple AI agents coordinate tasks simultaneously, intensifies these challenges. In such environments, chips constantly exchange data. Industry research indicates that in these workloads, delays in data handling and orchestration—typically managed by CPUs and networks—can constitute the majority of system latency, leaving expensive computational resources severely underutilized.

For companies constructing AI infrastructure, this paradigm shift means that merely possessing the market's best chips no longer guarantees competitive advantage. What truly matters now is how rapidly these processors can be supplied with data and how efficiently they can collaborate.

Networking Becomes the New Battleground

This priority is already reshaping network construction strategies. Fiber routes and pre-deployed infrastructure are evolving into genuine competitive advantages. Operators increasingly invest ahead of demand, deploying capacity early in anticipation of future AI workloads.

"Any investment we are making now... it will be consumed," Hatheier predicts. "In the arms race of AI, it's all about velocity, how quickly you really get this connected."

The market reflects this shift, with networking companies like Ciena experiencing significant stock surges as investors position for a multi-year data infrastructure buildout. However, some analysts caution that current valuations already assume sustained demand from hyperscalers, leaving little margin for execution errors.

Broader Supply Chain Strains Emerge

The pressure extends beyond networking to the entire supply chain. In the United States, electricity infrastructure struggles to match data center demand, with equipment shortages, labor constraints, and rising costs delaying new capacity development.

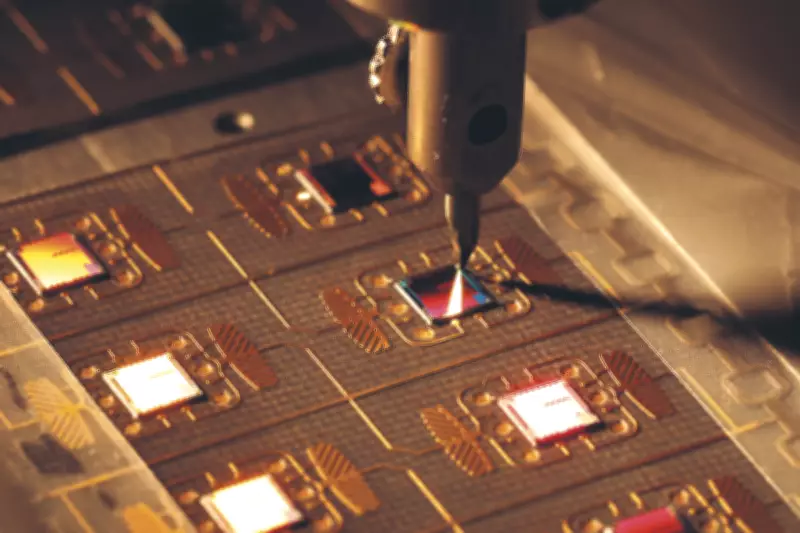

Semiconductor production faces parallel bottlenecks, as advanced manufacturing capacity continues to fall short of projected AI requirements. While Meta, Microsoft, and Alphabet dominate spending, enterprises are increasingly experimenting with their own AI infrastructure to gain tighter data control and sovereignty. This includes deploying on-premises GPU clusters at substantial expense.

"There are tons, thousands of enterprises just buying a million dollar rack of GPUs and like 'yeah, I want this in house and I can do whatever I want with my data'," Hatheier observes.

This diversification broadens network pressure—more users, more data, more movement, often in unpredictable patterns. While training demand has become relatively well understood, inference remains a significant uncertainty. "On the inference side nobody knows... we don't know what the next application's going to be," he adds.

CPUs Emerge as Another Critical Constraint

Simultaneously, the growing role of CPUs in coordinating AI systems presents another constraint alongside connectivity. New workloads drive demand for server processors to manage data movement and task execution, with some estimates suggesting millions of CPUs will be necessary to support next-generation AI deployments.

Supply chains have struggled to maintain pace, with chip manufacturers already warning of shortages and extended lead times. Collectively, these factors paint a picture of a system under tremendous strain. While computational power dominates headlines, without efficient data movement infrastructure and supporting hardware to manage it, much of that massive investment risks remaining frustratingly idle.