Training teams to use artificial intelligence at work has given me a close view of a growing professional divide. Some people delegate every task to the machine and effectively stop thinking for themselves. Others refuse to engage with it at all. A third group learns to work with AI critically, treating it like a bright, enthusiastic intern who needs management and support to perform well.

The Critical Factor: Curiosity Over Technical Skill

What separates these groups is rarely technical ability. It is curiosity: a willingness to experiment, make mistakes, and find out what AI is genuinely good at. In my experience, most people struggle with AI because they fundamentally misunderstand its nature. Users often swing between extremes, treating AI as an infallible oracle or dismissing it entirely after a single error.

Current AI systems share as much with the human brain as a bird does with an Airbus A380. Both can fly, but the similarities end there. Large language models predict the next word (more precisely, the next token) based on patterns in their training data. This explains why they can produce fluent prose on well-documented topics while confidently fabricating information on topics their training data covers poorly.
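To make the idea of predicting words from patterns concrete, here is a toy sketch in Python. It is not how a real large language model works internally: it only counts which word follows which in a tiny made-up corpus, and the function names `train_bigram_model` and `predict_next` are illustrative, not taken from any library.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Made-up training text, for illustration only.
corpus = "the cat sat on the mat . the cat chased the mouse ."
model = train_bigram_model(corpus)

print(predict_next(model, "the"))     # "cat" follows "the" most often in the corpus
print(predict_next(model, "airbus"))  # None: the word never appears in the corpus
```

One difference matters here. This toy returns nothing for a word it has never seen, whereas a real model generalizes from related patterns and still produces fluent text, which is where confident fabrication comes from.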

Shifting Mindsets: From Shortcut to Skill

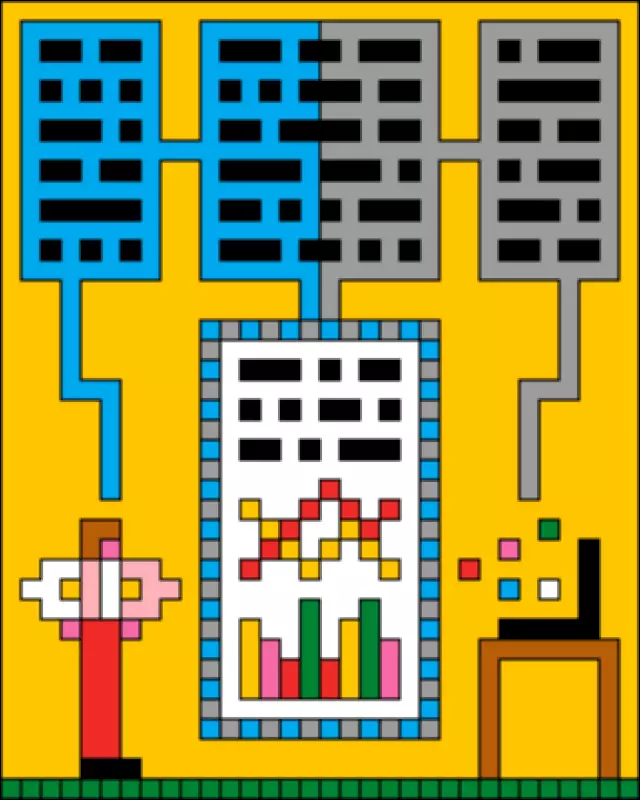

Once users grasp this reality, their approach changes. They begin giving AI clear objectives and proper context. When someone complains that all AI outputs are subpar, the cause is usually generic prompts that yield generic answers. The most successful people treat AI not as a magical shortcut but as a skill to be honed. They experiment daily and reflect on how to improve the results, aiming to make machines work for them rather than think for them.

This requires proactive, critical, and engaged usage. The skills essential for effective AI use, communication and delegation, are ones many people already have. Just as one would not abandon an intern with a project, AI needs direction, feedback, and correction. As the manager, you bear ultimate responsibility for the output. That is the "human in the loop" principle: a person stays accountable for quality and accuracy.
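The contrast between a generic prompt and one with a clear objective and proper context can be sketched in a few lines of Python. This is one possible structure, not a standard: the helper `build_prompt`, its field names, and the scheduling scenario are all hypothetical and chosen only for illustration.

```python
def build_prompt(objective, context, audience, constraints):
    """Assemble a prompt that states the goal, background, reader, and limits."""
    lines = [
        f"Objective: {objective}",
        f"Context: {context}",
        f"Audience: {audience}",
        "Constraints:",
    ]
    lines.extend(f"- {item}" for item in constraints)
    return "\n".join(lines)


# A generic prompt: the model has to guess everything that matters.
generic_prompt = "Write an email about the new schedule."

# A specific prompt: the same request, briefed the way you would brief an intern.
specific_prompt = build_prompt(
    objective="Draft an email announcing the new shift schedule",
    context="Shifts move from 8 hours to 6 hours starting next month",
    audience="Store staff, many of whom work part time",
    constraints=[
        "Under 150 words",
        "Friendly but direct tone",
        "End with who to contact for questions",
    ],
)

print(specific_prompt)
```

The template itself is not the point. Writing down the objective, context, audience, and constraints forces the same thinking that good delegation to a person requires, and the output still needs review before it is used.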

Navigating Risks and Responsibilities

Outsourcing judgment to AI or entrusting it with sensitive data poses significant risks. For instance, a manager at a small retail chain once showcased an HR dashboard coded with AI and, in building it, had inadvertently imported confidential information without considering security protocols or policies. Beyond security, AI systems are trained on human-generated data and can perpetuate its biases. It is advisable to avoid using AI for high-level subjective decisions, such as candidate screening, and to use it instead for factual checks, such as verifying experience levels.

The Inevitable Impact of AI

Ignoring AI will not halt its profound environmental, ethical, and social impacts. In a session with an environmental charity, a director grappled with balancing organizational efficiency gains against moral costs, such as the carbon footprint of AI operations. AI is not a fleeting trend; it is already mid-journey. The critical question is who will steer its development. AI-literate citizens are essential if society is to demand responsible and democratic implementation.

The evolution of AI is accelerating at an unprecedented pace. Today's version is the least advanced it will ever be, with improvements occurring faster than many realize. Tasks deemed impossible a year ago are now routine, and predictions about AI writing most code are nearing reality. Unlike past technological revolutions, which unfolded over decades, breakthroughs now achieve global adoption in mere months.

Urgency in Adaptation and Governance

We lack the luxury of prolonged debates; our social and democratic responses must keep pace with technological advances to avoid being governed by poorly understood tools. Those who will shape AI's future need not be technologists alone. They can be anyone willing to experiment and take both capabilities and risks seriously. We all share a responsibility to understand AI and advocate for its equitable use in workplaces, communities, and governments, ensuring no one is left behind in this transformative era.