Prime Minister Keir Starmer is moving to close a loophole in the UK's Online Safety Act, targeting AI chatbots that have so far escaped regulatory scrutiny. In a speech delivered on Monday, Starmer confirmed plans to amend the Crime and Policing Bill so that the providers of chatbots such as Grok, Gemini, and ChatGPT are subject to the same stringent duties as traditional social media platforms.

Addressing the Deepfake Scandal

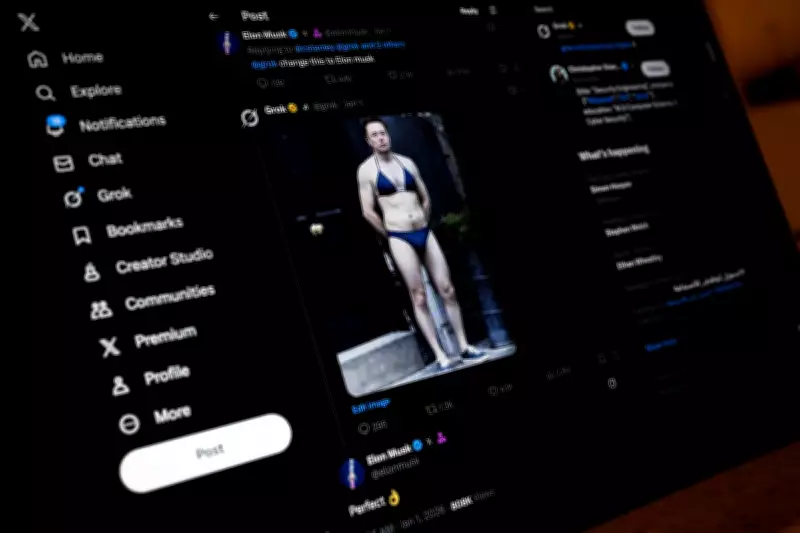

This move comes in direct response to a disturbing investigation by regulator Ofcom, which uncovered that Elon Musk's Grok chatbot was used to generate sexualized images of women and minors without their consent. Some of this harmful content was even visible in public threads, sparking widespread outrage and highlighting the urgent need for regulatory intervention.

Starmer said, "The action we took on Grok sent a clear message that no platform gets a free pass. Today we are closing loopholes that put children at risk." The Prime Minister's stance underscores a firm commitment to protecting vulnerable users in the rapidly evolving digital landscape.

Regulatory Powers and Ambiguities

Under the Online Safety Act, Ofcom can impose substantial fines on non-compliant companies: up to £18 million or 10 percent of a company's global annual turnover, whichever is higher. However, the legislation was originally drafted with user-to-user platforms in mind, which creates ambiguity when it is applied to AI systems that generate content themselves.
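To make the "whichever is higher" rule concrete, here is a minimal sketch of the arithmetic, using only the two figures quoted above. The function name and the sample turnover values are hypothetical and purely illustrative. The sketch does not model how Ofcom actually assesses turnover or sets a penalty in practice.

```python
# Illustrative only: the two thresholds come from the article;
# the function name and the sample turnovers are assumptions.
FIXED_CAP_GBP = 18_000_000   # the £18 million figure
TURNOVER_DIVISOR = 10        # 10 percent of global annual turnover


def max_fine_gbp(global_annual_turnover_gbp: int) -> float:
    """Return the maximum possible fine: the higher of the two thresholds."""
    turnover_based_cap = global_annual_turnover_gbp / TURNOVER_DIVISOR
    return max(FIXED_CAP_GBP, turnover_based_cap)


if __name__ == "__main__":
    # A company with £100 million turnover: 10 percent is £10 million,
    # so the £18 million figure is the higher of the two.
    print(max_fine_gbp(100_000_000))
    # A company with £1 billion turnover: 10 percent is £100 million,
    # which exceeds £18 million.
    print(max_fine_gbp(1_000_000_000))
```

The crossover sits at a turnover of £180 million. Below that, the fixed £18 million figure is the ceiling; above it, the turnover-based figure takes over, which is why the rule matters most for the largest companies.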

Ministers have argued that this regulatory gap must be addressed immediately as AI tools become increasingly embedded in mainstream platforms. The integration of Grok into Musk's social media platform X exemplifies this trend, with the chatbot gaining significant traction despite growing regulatory scrutiny.

Market Growth and Corporate Moves

Data from Apptopia reveals a notable surge in Grok's US market share, which climbed to 17.8 percent last month. This marks an increase from 14 percent in December and a mere 1.9 percent a year earlier, positioning Grok as the third most-used chatbot behind rivals ChatGPT and Gemini.

This growth coincides with aggressive scaling efforts by Musk's xAI. Recent reports from Reuters indicate that SpaceX has acquired the startup in a deal valuing xAI at $250 billion (£183.17 billion), further cementing xAI's influence in the AI sector.

Broader Child Safety Initiatives

Starmer's crackdown on AI chatbots is part of a comprehensive push to enhance child online safety. The government is currently consulting on several potential measures, including the introduction of a minimum age for social media use, which could bar children under 16 from accessing platforms altogether.

Additional proposals under consideration involve restrictions on algorithm-driven "infinite scrolling," tougher regulations surrounding VPNs, and limits on children's interactions with chatbots. Starmer remarked that technology is advancing at a rapid pace, and "the law has got to keep up." He added that these reforms aim to "protect children's wellbeing and help parents to navigate the minefield of social media."

Legislative Challenges and Enforcement

The Online Safety Act, which became law in 2023, has faced criticism for potentially being outpaced by the rapid development of generative AI. While the Act criminalizes the sharing of explicit deepfakes, it does not clearly define who is responsible when harmful content is produced by automated systems integrated into platforms.

Ofcom has already made "urgent contact" with X and xAI concerning Grok's outputs. Although the regulator has previously issued substantial fines to smaller platforms, its enforcement actions against a leading AI tool backed by one of the world's wealthiest individuals will be closely monitored by industry observers and policymakers alike.

In a related pledge last week, Starmer committed to banning the creation of sexualized images without consent, including AI-generated deepfakes, which he described as "disgusting and shameful." This reinforces the government's holistic approach to tackling digital harms and ensuring accountability in the age of artificial intelligence.